#CODE!!!! check

Text

oh and i've decided i really wanna be a game designer

#the last two years have been just OK I WANNA BE A TECH PERSON BUT i HAVE NO IDEA WHAT *KIND* OF TECH PERSON I WANNA BE#and i knew i wanted a creative career where i can still have fun and code#and then it just HIT ME#I wanna make games#it's everything i want!#creativity? check#CODE!!!! check#still something my parents would be cool with me doing? check!#also it helps that i literally grew up on gaming and being so fascinated by games in every way#like it's embarrassing that it took me this long to figure out that i want to do game design#the only problem is the ungodly long hours and crunching#but like that would've happened regardless of what tech related career i wanted#so might as well have creative fulfillment after a long week of working

4 notes


Text

“Humans in the loop” must detect the hardest-to-spot errors, at superhuman speed

I'm touring my new, nationally bestselling novel The Bezzle! Catch me SATURDAY (Apr 27) in MARIN COUNTY, then Winnipeg (May 2), Calgary (May 3), Vancouver (May 4), and beyond!

If AI has a future (a big if), it will have to be economically viable. An industry can't spend 1,700% more on Nvidia chips than it earns indefinitely – not even with Nvidia being a principal investor in its largest customers:

https://news.ycombinator.com/item?id=39883571

A company that pays 0.36-1 cents/query for electricity and (scarce, fresh) water can't indefinitely give those queries away by the millions to people who are expected to revise those queries dozens of times before eliciting the perfect botshit rendition of "instructions for removing a grilled cheese sandwich from a VCR in the style of the King James Bible":

https://www.semianalysis.com/p/the-inference-cost-of-search-disruption

Eventually, the industry will have to uncover some mix of applications that will cover its operating costs, if only to keep the lights on in the face of investor disillusionment (this isn't optional – investor disillusionment is an inevitable part of every bubble).

Now, there are lots of low-stakes applications for AI that can run just fine on the current AI technology, despite its many – and seemingly inescapable – errors ("hallucinations"). People who use AI to generate illustrations of their D&D characters engaged in epic adventures from their previous gaming session don't care about the odd extra finger. If the chatbot powering a tourist's automatic text-to-translation-to-speech phone tool gets a few words wrong, it's still much better than the alternative of speaking slowly and loudly in your own language while making emphatic hand-gestures.

There are lots of these applications, and many of the people who benefit from them would doubtless pay something for them. The problem – from an AI company's perspective – is that these aren't just low-stakes, they're also low-value. Their users would pay something for them, but not very much.

For AI to keep its servers on through the coming trough of disillusionment, it will have to locate high-value applications, too. Economically speaking, the function of low-value applications is to soak up excess capacity and produce value at the margins after the high-value applications pay the bills. Low-value applications are a side-dish, like the coach seats on an airplane whose total operating expenses are paid by the business class passengers up front. Without the principal income from high-value applications, the servers shut down, and the low-value applications disappear:

https://locusmag.com/2023/12/commentary-cory-doctorow-what-kind-of-bubble-is-ai/

Now, there are lots of high-value applications the AI industry has identified for its products. Broadly speaking, these high-value applications share the same problem: they are all high-stakes, which means they are very sensitive to errors. Mistakes made by apps that produce code, drive cars, or identify cancerous masses on chest X-rays are extremely consequential.

Some businesses may be insensitive to those consequences. Air Canada replaced its human customer service staff with chatbots that just lied to passengers, stealing hundreds of dollars from them in the process. But the process for getting your money back after you are defrauded by Air Canada's chatbot is so onerous that only one passenger has bothered to go through it, spending ten weeks exhausting all of Air Canada's internal review mechanisms before fighting his case for weeks more at the regulator:

https://bc.ctvnews.ca/air-canada-s-chatbot-gave-a-b-c-man-the-wrong-information-now-the-airline-has-to-pay-for-the-mistake-1.6769454

There's never just one ant. If this guy was defrauded by an AC chatbot, so were hundreds or thousands of other fliers. Air Canada doesn't have to pay them back. Air Canada is tacitly asserting that, as the country's flagship carrier and near-monopolist, it is too big to fail and too big to jail, which means it's too big to care.

Air Canada shows that for some business customers, AI doesn't need to be able to do a worker's job in order to be a smart purchase: a chatbot can replace a worker, fail to do that worker's job, and still save the company money on balance.

I can't predict whether the world's sociopathic monopolists are numerous and powerful enough to keep the lights on for AI companies through leases for automation systems that let them commit consequence-free fraud by replacing workers with chatbots that serve as moral crumple-zones for furious customers:

https://www.sciencedirect.com/science/article/abs/pii/S0747563219304029

But even stipulating that this is sufficient, it's intrinsically unstable. Anything that can't go on forever eventually stops, and the mass replacement of humans with high-speed fraud software seems likely to stoke the already blazing furnace of modern antitrust:

https://www.eff.org/de/deeplinks/2021/08/party-its-1979-og-antitrust-back-baby

Of course, the AI companies have their own answer to this conundrum. A high-stakes/high-value customer can still fire workers and replace them with AI – they just need to hire fewer, cheaper workers to supervise the AI and monitor it for "hallucinations." This is called the "human in the loop" solution.

The human in the loop story has some glaring holes. From a worker's perspective, serving as the human in the loop in a scheme that cuts wage bills through AI is a nightmare – the worst possible kind of automation.

Let's pause for a little detour through automation theory here. Automation can augment a worker. We can call this a "centaur" – the worker offloads a repetitive task, or one that requires a high degree of vigilance, or (worst of all) both. They're a human head on a robot body (hence "centaur"). Think of the sensor/vision system in your car that beeps if you activate your turn-signal while a car is in your blind spot. You're in charge, but you're getting a second opinion from the robot.

Likewise, consider an AI tool that double-checks a radiologist's diagnosis of your chest X-ray and suggests a second look when its assessment doesn't match the radiologist's. Again, the human is in charge, but the robot is serving as a backstop and helpmeet, using its inexhaustible robotic vigilance to augment human skill.

That's centaurs. They're the good automation. Then there's the bad automation: the reverse-centaur, when the human is used to augment the robot.

Amazon warehouse pickers stand in one place while robotic shelving units trundle up to them at speed; then, the haptic bracelets shackled around their wrists buzz at them, directing them to pick up specific items and move them to a basket, while a third automation system penalizes them for taking toilet breaks or even just walking around and shaking out their limbs to avoid a repetitive strain injury. This is a robotic head using a human body – and destroying it in the process.

An AI-assisted radiologist processes fewer chest X-rays every day, costing their employer more, on top of the cost of the AI. That's not what AI companies are selling. They're offering hospitals the power to create reverse centaurs: radiologist-assisted AIs. That's what "human in the loop" means.

This is a problem for workers, but it's also a problem for their bosses (assuming those bosses actually care about correcting AI hallucinations, rather than providing a figleaf that lets them commit fraud or kill people and shift the blame to an unpunishable AI).

Humans are good at a lot of things, but they're not good at eternal, perfect vigilance. Writing code is hard, but performing code-review (where you check someone else's code for errors) is much harder – and it gets even harder if the code you're reviewing is usually fine, because this requires that you maintain your vigilance for something that only occurs at rare and unpredictable intervals:

https://twitter.com/qntm/status/1773779967521780169

But for a coding shop to make the cost of an AI pencil out, the human in the loop needs to be able to process a lot of AI-generated code. Replacing a human with an AI doesn't produce any savings if you need to hire two more humans to take turns doing close reads of the AI's code.

This is the fatal flaw in robo-taxi schemes. The "human in the loop" who is supposed to keep the murderbot from smashing into other cars, steering into oncoming traffic, or running down pedestrians isn't a driver, they're a driving instructor. This is a much harder job than being a driver, even when the student driver you're monitoring is a human, making human mistakes at human speed. It's even harder when the student driver is a robot, making errors at computer speed:

https://pluralistic.net/2024/04/01/human-in-the-loop/#monkey-in-the-middle

This is why the doomed robo-taxi company Cruise had to deploy 1.5 skilled, high-paid human monitors to oversee each of its murderbots, while traditional taxis operate at a fraction of the cost with a single, precaratized, low-paid human driver:

https://pluralistic.net/2024/01/11/robots-stole-my-jerb/#computer-says-no

The vigilance problem is pretty fatal for the human-in-the-loop gambit, but there's another problem that is, if anything, even more fatal: the kinds of errors that AIs make.

Foundationally, AI is applied statistics. An AI company trains its AI by feeding it a lot of data about the real world. The program processes this data, looking for statistical correlations in that data, and makes a model of the world based on those correlations. A chatbot is a next-word-guessing program, and an AI "art" generator is a next-pixel-guessing program. They're drawing on billions of documents to find the most statistically likely way of finishing a sentence or a line of pixels in a bitmap:

https://dl.acm.org/doi/10.1145/3442188.3445922
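To make "next-word guessing" concrete, here is a toy sketch in Python. It is not how any production chatbot is built (those use large neural networks trained on billions of documents); it only counts which word follows which in a tiny made-up corpus and picks the most frequent follower. The corpus and the function names `train_bigrams` and `guess_next` are assumptions invented for this illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def guess_next(follows, word):
    """Return the most frequently seen follower of `word`, or None if unseen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical training data, standing in for "billions of documents".
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigrams(corpus)

print(guess_next(model, "the"))    # the statistically likeliest continuation
print(guess_next(model, "zebra"))  # a word the model has never seen
```

Asked what follows "the", this toy model answers "cat", because that is the most common continuation in its training text, not because it knows anything about cats.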

This means that AI doesn't just make errors – it makes subtle errors, the kinds of errors that are the hardest for a human in the loop to spot, because they are the most statistically probable ways of being wrong. Sure, we notice the gross errors in AI output, like confidently claiming that a living human is dead:

https://www.tomsguide.com/opinion/according-to-chatgpt-im-dead

But the most common errors that AIs make are the ones we don't notice, because they're perfectly camouflaged as the truth. Think of the recurring AI programming error that inserts a call to a nonexistent library called "huggingface-cli," which is what the library would be called if developers reliably followed naming conventions. But due to a human inconsistency, the real library has a slightly different name. The fact that AIs repeatedly inserted references to the nonexistent library opened up a vulnerability – a security researcher created an (inert) malicious library with that name and tricked numerous companies into compiling it into their code because their human reviewers missed the chatbot's (statistically indistinguishable from the truth) lie:

https://www.theregister.com/2024/03/28/ai_bots_hallucinate_software_packages/
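One mechanical backstop for this particular failure is to compare every declared dependency against a list of packages a human has already vetted, instead of trusting a reviewer to notice a plausible-looking name. The Python sketch below is a minimal illustration under stated assumptions: the allowlist contents, the sample requirements, and the helper names are all hypothetical, and it assumes the real package is the one published as `huggingface_hub`. It does no network lookups and handles only simple requirement lines.

```python
import re


def normalize(name):
    """Lowercase the name and collapse runs of '-', '_', '.' into '-' (PEP 503 style)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(line):
    """Extract the bare package name from a simple requirements line.

    Returns None for blank lines, comments, and option lines such as '-r other.txt'.
    """
    line = line.split("#", 1)[0].strip()
    if not line:
        return None
    match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
    return normalize(match.group(0)) if match else None


def unvetted(requirements, allowlist):
    """Return normalized names of requirements that are not on the allowlist."""
    allowed = {normalize(name) for name in allowlist}
    names = (requirement_name(line) for line in requirements)
    return [name for name in names if name is not None and name not in allowed]


# Hypothetical allowlist and requirements file contents, for illustration only.
VETTED = ["huggingface_hub", "requests"]
reqs = [
    "requests>=2.31",
    "huggingface-cli",                         # the plausible, hallucinated name
    "huggingface_hub[cli]  # the vetted name",
    "",
]
flagged = unvetted(reqs, VETTED)
print(flagged)
```

A check like this only moves the vigilance problem to whoever maintains the allowlist, but that is a one-time decision per package, not a puzzle hidden in every pull request.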

For a driving instructor or a code reviewer overseeing a human subject, the majority of errors are comparatively easy to spot, because they're the kinds of errors that lead to inconsistent library naming – places where a human behaved erratically or irregularly. But when reality is irregular or erratic, the AI will make errors by presuming that things are statistically normal.

These are the hardest kinds of errors to spot. They couldn't be harder for a human to detect if they were specifically designed to go undetected. The human in the loop isn't just being asked to spot mistakes – they're being actively deceived. The AI isn't merely wrong, it's constructing a subtle "what's wrong with this picture"-style puzzle. Not just one such puzzle, either: millions of them, at speed, which must be solved by the human in the loop, who must remain perfectly vigilant for things that are, by definition, almost totally unnoticeable.

This is a special new torment for reverse centaurs – and a significant problem for AI companies hoping to accumulate and keep enough high-value, high-stakes customers on their books to weather the coming trough of disillusionment.

This is pretty grim, but it gets grimmer. AI companies have argued that they have a third line of business, a way to make money for their customers beyond automation's gifts to their payrolls: they claim that they can perform difficult scientific tasks at superhuman speed, producing billion-dollar insights (new materials, new drugs, new proteins) at unimaginable speed.

However, these claims – credulously amplified by the non-technical press – keep on shattering when they are tested by experts who understand the esoteric domains in which AI is said to have an unbeatable advantage. For example, Google claimed that its DeepMind AI had discovered "millions of new materials," "equivalent to nearly 800 years’ worth of knowledge," constituting "an order-of-magnitude expansion in stable materials known to humanity":

https://deepmind.google/discover/blog/millions-of-new-materials-discovered-with-deep-learning/

It was a hoax. When independent materials scientists reviewed representative samples of these "new materials," they concluded that "no new materials have been discovered" and that not one of these materials was "credible, useful and novel":

https://www.404media.co/google-says-it-discovered-millions-of-new-materials-with-ai-human-researchers/

As Brian Merchant writes, AI claims are eerily similar to "smoke and mirrors" – the dazzling reality-distortion field thrown up by 17th century magic lantern technology, which millions of people ascribed wild capabilities to, thanks to the outlandish claims of the technology's promoters:

https://www.bloodinthemachine.com/p/ai-really-is-smoke-and-mirrors

The fact that we have a four-hundred-year-old name for this phenomenon, and yet we're still falling prey to it, is frankly a little depressing. And, unlucky for us, it turns out that AI therapybots can't help us with this – rather, they're apt to literally convince us to kill ourselves:

https://www.vice.com/en/article/pkadgm/man-dies-by-suicide-after-talking-with-ai-chatbot-widow-says

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2024/04/23/maximal-plausibility/#reverse-centaurs

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#ai#automation#humans in the loop#centaurs#reverse centaurs#labor#ai safety#sanity checks#spot the mistake#code review#driving instructor

713 notes


Text

pocari

#art#illustration#aesthetic#pocari sweat#done in collaboration with pocari sweat#check my instagram for a 20% discount code on the drink!!

3K notes


Text

okay last one for the night but. honestly i really hate how the franchise has been using loyalty to Rick as a shield for so long. If Rick was involved in a project or not doesn't matter, especially not anymore.

ReadRiordan and the publishing for the franchise has been using this tactic for ages - they obscure if any writing related to the series wasn't written by Rick unless it's special circumstances. It's near impossible to find out who the ghostwriters are (Stephanie True Peters and Mary-Jane Knight). TSATS was promoted as the first time we got a non-Riordan (Rick or Haley) author working on one of the companion novels despite having seven already existing ghostwritten books in the series. The only reason Mark Oshiro was emphasized so heavily for TSATS was because they also work as a sensitivity reader for topics such as queer identity, and Rick had received backlash in the past for being a Straight Cis Old White Guy repeatedly falling into bad habits (that he hasn't broken out of) with certain characterizations that he kept doubling-down on or retconning into oblivion. The show emphasizes that Rick was involved, but the LA Times article brings into question exactly how much he was involved, and it doesn't even really matter either way. The ReadRiordan site actively avoids putting any writing credits on their articles (or art credits...) or anywhere on their site.

Practically the entire fandom unanimously agrees the musical - which had zero involvement from Rick - is the best adaptation of the series so far, including the TV show. Some of the best writing to come out of the series recently was the stuff ghostwritten by Stephanie True Peters (Camp Half-Blood Confidential, Camp Jupiter Classified, Nine from the Nine Worlds, etc). And yet when promotional stuff is posted about CHB:C, there's clearly coded language used to hide the fact that Rick himself didn't write it. Yes, that's how ghostwriters work, but at this point we should really stop pretending "Rick Riordan" isn't just a pen name for a group of authors like "Erin Hunter" and that Rick is actually writing everything in the series. I can easily look up and see which Animorphs books were ghostwritten, and who those authors were. I can find every "Erin Hunter" easily listed on official sites. And yet most people don't even know the Riordanverse franchise has ghostwriters at all.

And the franchise is still trying to use the "Tio/Uncle Rick" stuff. Author loyalty and marketing parasocial relationships aren't going to save the franchise when the author himself can't hold up his own original themes or even keep basic series bible details straight, and especially not if the editors are barely, if at all, doing their job. And please at least get a goddamn series bible by this point.

#pjo#riordanverse#rick riordan#readriordan#pjo tv crit#rr crit#< this isnt just rr crit im coming for the whole brand#readriordan site also cant center their webpage footer properly. thats just kind of sad#@readriordan staff make sure your </center> code has the closing angle bracket in the right spot. check for spaces.#mary-jane knight#stephanie true peters#< more self organization tags cause i like talking about the ghostwriters. unsung heroes#long post //

968 notes


Text

robert chase one of the characters of all time. hes blonde. he went to seminary school. he purposefully murdered a patient. he’s a vapid slut. allergic to strawberries. was captain of his college bowling team. desperately needs to be on antidepressants. he’s divorced. his ex-wife was/is in love with his dadboss. it’s heavily implied that this is part of why he married her to begin with. he’s been fired multiple times but he keeps coming back like a fucked-up obedient boomerang. he’s the best surgeon in the hospital. all this while having the personality of a sopping wet cardboard box of corn flakes that somebody poured milk into and let mildew.

#the concept for chase was#‘what if house had like. a surrogate son. and he kind of wanted to fuck him and also hes like catholic’#‘ohhh and he can be australian!’#‘why would he be australian?’#‘just cuz’#house md#robert chase#beautiful loser very virgin mary coded man#i do like chase but i find it amusing that i also find him boring#bc objectively theres no reason he should be?#hes a great character i love his story arcs i love how sarcastic he is i love how hes doomed to repeat houses fate#but compared to say. foreman? there is NOTHING#im very sure ill manage to gaslight myself into loving him later#dr robert chase#i didnt actually fact check the bowling thing. dont quote me its late

1K notes


Text

the way dean started walking them away the moment he noticed the guy checking sam’s butt out…protective alpha behavior <3

#this scene is so a/b/o coded it’s insane#and dean’s eye contact with the dude tho?#he really went “you wanna check out my sammy’s ass? well too bad douchebag it’s mine”#wincest#samdean#sam winchester#dean winchester#spn

361 notes


Text

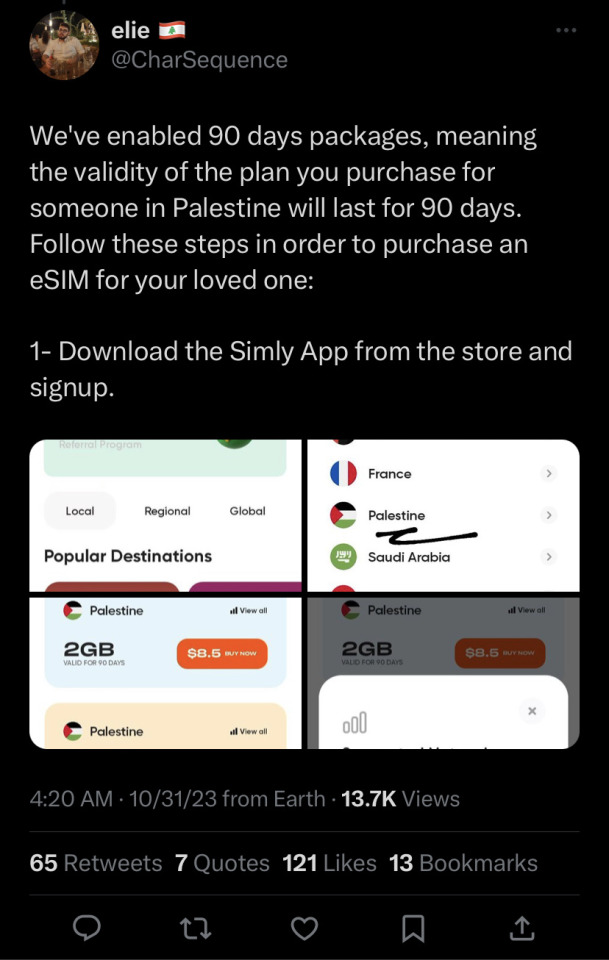

Mirna_elhelbawi and a team of people are still working on getting esims to Gazans. If you are able, please consider buying a plan and sending it to the email listed above and on her Instagram. They have been working tirelessly for the past days to ensure that Palestinians can contact their friends and families amid the continued displacement and horrors they are experiencing minute by minute. Last I heard, people with these plans were still able to access the internet when Israel cut it and all communications a couple of days ago, and it is vital as some areas are still experiencing difficulties. Please check out her page for any updates as they continue to post more and answer questions 🙏🇵🇸

UPDATE: Mirna has asked that we stop for the time being on sending them E-sims, but that doesn’t mean they won’t start up again in the future! Please feel free to keep reblogging for the info and please continue to check her account for updates!!

NEWEST UPDATE: Mirna and her team are back to taking E-Sim donations! Please check out her Instagram for any updates

#i used simly and the process was very fast#i am still waiting for them to give my code to someone but they have been doing incredible work trying to connect not only journalists#but everyone#please check her Instagram for any updates as I know nomad stopped working in Palestine recently#free palestine#from the land to the sea#boost

414 notes


Text

yeah so i'm falling for @weevmo's Guys... they're so neat! i dig their vibes and can't wait to see what Corduroy Stew is all about <3

#fuckkkkk theyre so SHAPED!!!#i can feel myself getting So attached#if anything happens to them... i will simultaneously wail and cheer#nimrod is so cute. nimmy. the nimster. id love to toss birdseed on the ground for him to peck at. pigeon coded tiny man#lulu being a sock puppet trainer THATS SUCH A NEAT IDEA ARE YOU KIDDING IM OBSESSED#now im curious as to if sock puppets are like Animals or if they're as sapient as the others...#but yes im already looking directly at lulu she seems Fun as fuck#oughhhhh and wb. listen. Listen. im a sucker for handsome Head Empty characters and he seems like one of those#i also like characters with many hidden internal problems and wb seems like one of those too <3#i hear that the three of them are siblings and that is Such catnip to me...#scribble garnish#dont know what else to tag this as! huh!#BUT YEAH THIS ALL SEEMS NEAT THEYRE COOL i Will be following this project to see where it goes#so far it has great characters a sprinkling of Intrigue bomb art (which. ofc it does its weevmo cmon guys)#and a neat song that i sweAR TO FUCK REMINDS ME OF SOMETHING. THIS HAS BEEN BOTHERING ME FOR DAYS#the jingle is just vaguely familiar enough to make me Writhe in the agony of Unknown Knowns#but yes! i hope you all go check out weevmo's blog if you havent already! Good Stuff All Around!!

317 notes


Text

Page 23 oooh boy

Previous - next- first

#my art#fnaf#five nights at freddy's#security breach#fnaf au#into the ballpit au#michael afton#gregory#evan afton#crying child#fazbear frights#into the pit#y’all are gonna want to check that code btw there’s binary code translators on google#ewe

2K notes


Note

im shaking, im cackling so much at the artwork

caesar NOT BORINB

fence SHUICHISCUTE

spiral YOU ARE MY FAVORITE

*triumphant trumpet noises*

🎊🎊🎊 !!we have a winner!! 🎊🎊🎊

*there is now confetti everywhere. you'll be finding confetti stuck to your socks for the next few weeks. confetti is what your life has become.*

solved the codes in the draw- here's the prize!

#a doodle for thee....#ask maiora#kokichi ouma#drv3 DICE#saiouma#maiora draws#my “cypers are fun” agenda is working *rubs evil mittens together*#dice found family#<- that's the hill im dying on#anyway ive decided his name is big red and he has a 7 gimmick because dice puns#i do love how you doubled down on it being “borinb” it made me double check my own code and got me giggling/pos#congrats on being to read shit on tumbr compression quality#ouma kokichi

499 notes


Text

apex legends kill code part 4 & text posts

#apex legends#play apex#octane#duardo silva#lifeline#ajay che#loba andrade#revenant#kaleb cross#valkyrie#kairi imahara#mad maggie#margaret kohere#apex kill code#kill code part 4#text post meme#apex meme#hey guys… is octane ok. like is anyone gonna check in on him#boy is facing IMMENSE trauma and betrayal rn

278 notes


Text

mariana : ''out of all the creators i think that i like slime the best , hes very funny and very crazy and i get along with him very well , i want to make love with him ''

@qfliporiana

#this clips is from solarkarii on twt!#go check xem out /threat#oh god i haye coding <- what im doing rn#phantom shrieks#qsmp#qsmp slimecicle#qsmp mariana#slimeriana#fliporiana#OHHH I LOVE THEM SO MUCH#this is the gay agenda#i dont even care that they want to have sex with eachother its okah#they deserve it#HE CALLS SLIME HANDSOME???

1K notes


Text

what if we kissed in front of a giant vat of veal stock?

#sydcarmy#syd x carmy#carmen x sydney#my art#the bear fanart#illustration#artists on tumblr#fanart#black and white#let's never put the veal stock on the tippy top shelf again#but also “wanna check on the veal stock” could be their code for “let's make out”

239 notes


Text

"hey I need a favor rq"

this is based off this:

#ghost: “gladly”#price: “come again?”#Ghost will do it with zero hesitation bcuz Ghost and Raven hates each other((sibling coded))#Price checks Raven up every 2 seconds because he is a worrywart#i encourage everyone to draw their oc or yourself being squish by your fav cod characters as well#Raven's living MY DREAM#*BANGS HAND ON THE TABLE* I JUST WANT A PAIR OF BICEPS TO POP MY HEAD OFF TOO#mb its like 2am im really not normal rn#feral on main? happens more likely than you think#gummmyart#doodle#simon ghost riley#captain john price#my oc#my oc art#cod oc#cod oc art#[oc]Raven#Raven[oc]#PriceRaven#GhostRaven#simon ghost riley x oc#john price x oc#captain price x oc

168 notes


Text

the most cursed au of all.

#rain code#mdarc#master detective archives#there's a lot more to this unfortunately. theres so much more of this#abcd art#halara nightmare#desuhiko thunderbolt#fubuki clockford#vivia twilight#sorry desuhiko is awful. it will continue#(ok check the reblogs for the full post)

169 notes


Text

The most terrifying creature of all

[First] Prev <--> Next

#poorly drawn mdzs#mdzs#wei wuxian#lan jingyi#lan sizhui#ouyang zizhen#nameless red disciple#Absolutely none of these people should ever be allowed in a haunted house#Except maybe LSZ#The juniors would either 1) Cry 2) punch one of the staff 3) pee a little bit 4) break something in fear. Dreadful patrons.#and they are supposed to be in training for dealing with the supernatural too! SMH!#I did enjoy drawing them cuddled up though B*)#WWX is banned from haunted houses too because he tries to get himself involved as one of the scare-ers. Which is a violation of union code#He's 'trying to make the experience more enriching' but in reality he just likes to get a reaction out of people.#Truly a guy you go to a corn maze with only to get separated from him.#And when you do find him he's face down what looks like his own blood#As you try and check to see if he's okay he suddenly grabs your ankles and starts spitting lines from the exorcist#Then laughs when you scream#Truly a guy who is NOT getting a second date#Next episode features something very very scary. Universally so.#a t-t-t-teen girl.#LJY is curled up on the ground btw. Joining the JC sock puppet that has also been just out of sight this entire time

767 notes
