#Artificial Intelligence & Machine Learning


With training in artificial intelligence (AI) and machine learning (ML), you can pursue a variety of exciting and high-demand careers. As a machine learning engineer, you can develop algorithms and models that enable computers to learn from data and make predictions. Data scientists extract valuable insights from large datasets, informing strategic decisions for businesses.

0 notes


Jobs That AI Can’t Replace: The Impact of AI on Workforce

This article will delve into the connection between automation, IT, and the workforce. Explore the roles where human creativity prevails over automation’s prowess. From cybersecurity to software development, certain domains necessitate a human touch. Learn how blending human expertise and artificial intelligence can shape a promising future.

0 notes


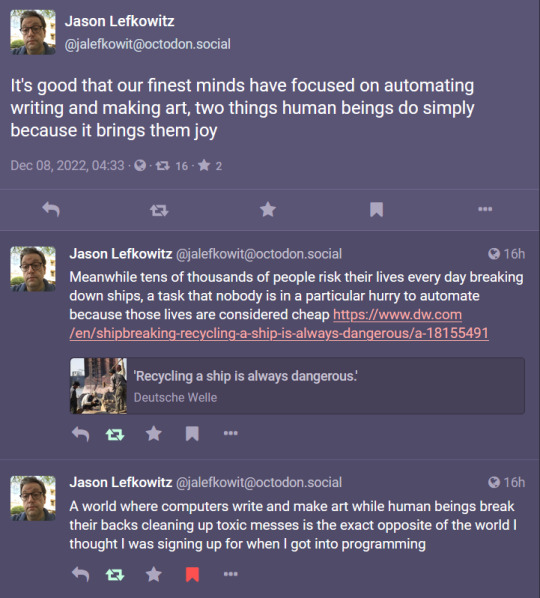

[Image description: A series of posts from Jason Lefkowitz @[email protected] dated Dec 08, 2022, 04:33, reading:

It's good that our finest minds have focused on automating writing and making art, two things human beings do simply because it brings them joy.

Meanwhile tens of thousands of people risk their lives every day breaking down ships, a task that nobody is in a particular hurry to automate because those lives are considered cheap https://www.dw.com/en/shipbreaking-recycling-a-ship-is-always-dangerous/a-18155491

(Headline: 'Recycling a ship is always dangerous.' on Deutsche Welle)

A world where computers write and make art while human beings break their backs cleaning up toxic messes is the exact opposite of the world I thought I was signing up for when I got into programming

/end image description]

#artificial intelligence#computers#programming#technology#Jason Lefkowitz#Mastodon#labor#transcription#capitalism#corporations#exploitation#class struggle#creativity#humans#safety#survival#vehicles#recycling#generative images#machine learning

28K notes


we need to come up with a good word for "AI" that doesn't imply it's artificial or intelligent and highlights the stolen human labor. like what if we call it "theftgen"

(workshop this with me)

#theftgen#theft generation#machine learning#artificial intelligence#chatgpt#midjourney#dalle#stable diffusion

1K notes


AIs being able to convincingly pretend to know things isn't a sign of intelligence. Come back when we have an AI that can convincingly pretend to be unaware of things that are common knowledge for the sake of a bit.

6K notes


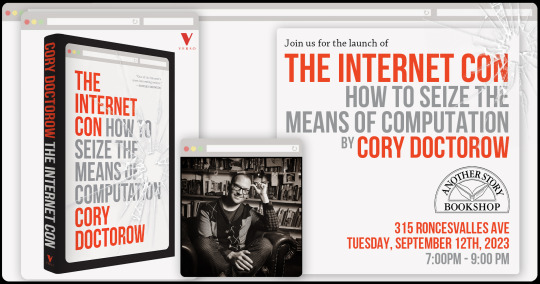

How plausible sentence generators are changing the bullshit wars

This Friday (September 8) at 10hPT/17hUK, I'm livestreaming "How To Dismantle the Internet" with Intelligence Squared.

On September 12 at 7pm, I'll be at Toronto's Another Story Bookshop with my new book The Internet Con: How to Seize the Means of Computation.

In my latest Locus Magazine column, "Plausible Sentence Generators," I describe how I unwittingly came to use – and even be impressed by – an AI chatbot – and what this means for a specialized, highly salient form of writing, namely, "bullshit":

https://locusmag.com/2023/09/commentary-by-cory-doctorow-plausible-sentence-generators/

Here's what happened: I got stranded at JFK due to heavy weather and an air-traffic control tower fire that locked down every westbound flight on the east coast. The American Airlines agent told me to try going standby the next morning, and advised that if I booked a hotel and saved my taxi receipts, I would get reimbursed when I got home to LA.

But when I got home, the airline's reps told me they would absolutely not reimburse me, that this was their policy, and they didn't care that their representative had promised they'd make me whole. This was so frustrating that I decided to take the airline to small claims court: I'm no lawyer, but I know that a contract takes place when an offer is made and accepted, and so I had a contract, and AA was violating it, and stiffing me for over $400.

The problem was that I didn't know anything about filing a small claim. I've been ripped off by lots of large American businesses, but none had pissed me off enough to sue – until American broke its contract with me.

So I googled it. I found a website that gave step-by-step instructions, starting with sending a "final demand" letter to the airline's business office. They offered to help me write the letter, and so I clicked and I typed and I wrote a pretty stern legal letter.

Now, I'm not a lawyer, but I have worked for a campaigning law-firm for over 20 years, and I've spent the same amount of time writing about the sins of the rich and powerful. I've seen a lot of threats, both those received by our clients and sent to me.

I've been threatened by everyone from Gwyneth Paltrow to Ralph Lauren to the Sacklers. I've been threatened by lawyers representing the billionaire who owned NSO Group, the notorious cyber arms-dealer. I even got a series of vicious, baseless threats from lawyers representing LAX's private terminal.

So I know a thing or two about writing a legal threat! I gave it a good effort and then submitted the form, and got a message asking me to wait for a minute or two. A couple minutes later, the form returned a new version of my letter, expanded and augmented. Now, my letter was a little scary – but this version was bowel-looseningly terrifying.

I had unwittingly used a chatbot. The website had fed my letter to a Large Language Model, likely ChatGPT, with a prompt like, "Make this into an aggressive, bullying legal threat." The chatbot obliged.

I don't think much of LLMs. After you get past the initial party trick of getting something like, "instructions for removing a grilled-cheese sandwich from a VCR in the style of the King James Bible," the novelty wears thin:

https://www.emergentmind.com/posts/write-a-biblical-verse-in-the-style-of-the-king-james

Yes, science fiction magazines are inundated with LLM-written short stories, but the problem there isn't merely the overwhelming quantity of machine-generated stories – it's also that they suck. They're bad stories:

https://www.npr.org/2023/02/24/1159286436/ai-chatbot-chatgpt-magazine-clarkesworld-artificial-intelligence

LLMs generate naturalistic prose. This is an impressive technical feat, and the details are genuinely fascinating. This series by Ben Levinstein is a must-read peek under the hood:

https://benlevinstein.substack.com/p/how-to-think-about-large-language

But "naturalistic prose" isn't necessarily good prose. A lot of naturalistic language is awful. In particular, legal documents are fucking terrible. Lawyers affect a stilted, stylized language that is both officious and obfuscated.

The LLM I accidentally used to rewrite my legal threat transmuted my own prose into something that reads like it was written by a $600/hour paralegal working for a $1500/hour partner at a white-shoe law-firm. As such, it sends a signal: "The person who commissioned this letter is so angry at you that they are willing to spend $600 to get you to cough up the $400 you owe them. Moreover, they are so well-resourced that they can afford to pursue this claim beyond any rational economic basis."

Let's be clear here: these kinds of lawyer letters aren't good writing; they're a highly specific form of bad writing. The point of this letter isn't to parse the text, it's to send a signal. If the letter was well-written, it wouldn't send the right signal. For the letter to work, it has to read like it was written by someone whose prose-sense was irreparably damaged by a legal education.

Here's the thing: the fact that an LLM can manufacture this once-expensive signal for free means that the signal's meaning will shortly change, forever. Once companies realize that this kind of letter can be generated on demand, it will cease to mean, "You are dealing with a furious, vindictive rich person." It will come to mean, "You are dealing with someone who knows how to type 'generate legal threat' into a search box."

Legal threat letters are in a class of language formally called "bullshit":

https://press.princeton.edu/books/hardcover/9780691122946/on-bullshit

LLMs may not be good at generating science fiction short stories, but they're excellent at generating bullshit. For example, a university prof friend of mine admits that they and all their colleagues are now writing grad student recommendation letters by feeding a few bullet points to an LLM, which inflates them with bullshit, adding puffery to swell those bullet points into lengthy paragraphs.

Naturally, the next stage is that profs on the receiving end of these recommendation letters will ask another LLM to summarize them by reducing them to a few bullet points. This is next-level bullshit: a few easily-grasped points are turned into a florid sheet of nonsense, which is then reconverted into a few bullet-points again, though these may only be tangentially related to the original.

What comes next? The reference letter becomes a useless signal. It goes from being a thing that a prof has to really believe in you to produce, whose mere existence is thus significant, to a thing that can be produced with the click of a button, and then it signifies nothing.

We've been through this before. It used to be that sending a letter to your legislative representative meant a lot. Then, automated internet forms produced by activists like me made it far easier to send those letters and lawmakers stopped taking them so seriously. So we created automatic dialers to let you phone your lawmakers, this being another once-powerful signal. Lowering the cost of making the phone call inevitably made the phone call mean less.

Today, we are in a war over signals. The actors and writers who've trudged through the heat-dome up and down the sidewalks in front of the studios in my neighborhood are sending a very powerful signal. The fact that they're fighting to prevent their industry from being enshittified by plausible sentence generators that can produce bullshit on demand makes their fight especially important.

Chatbots are the nuclear weapons of the bullshit wars. Want to generate 2,000 words of nonsense about "the first time I ate an egg," to run overtop of an omelet recipe you're hoping to make the number one Google result? ChatGPT has you covered. Want to generate fake complaints or fake positive reviews? The Stochastic Parrot will produce 'em all day long.

As I wrote for Locus: "None of this prose is good, none of it is really socially useful, but there’s demand for it. Ironically, the more bullshit there is, the more bullshit filters there are, and this requires still more bullshit to overcome it."

Meanwhile, AA still hasn't answered my letter, and to be honest, I'm so sick of bullshit I can't be bothered to sue them anymore. I suppose that's what they were counting on.

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2023/09/07/govern-yourself-accordingly/#robolawyers

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#chatbots#plausible sentence generators#robot lawyers#robolawyers#ai#ml#machine learning#artificial intelligence#stochastic parrots#bullshit#bullshit generators#the bullshit wars#llms#large language models#writing#Ben Levinstein

2K notes


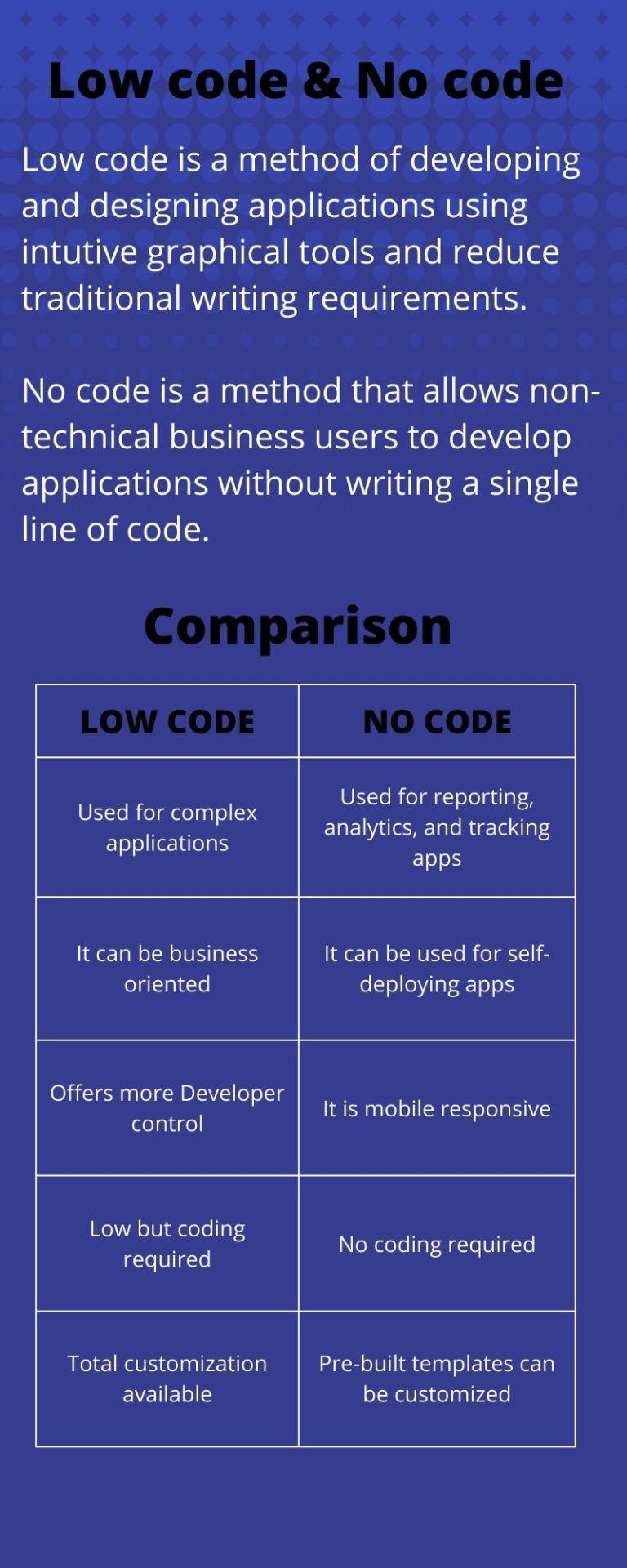

What is Low Code and No Code?

#machine learning#deep learning#machine learning models#machine learning is#machine learning algorithms#ai learning#artificial intelligence machine learning#machine learning applications#artificial learning#software for machine learning

0 notes


For the purposes of this poll, research is defined as reading multiple non-opinion articles from different credible sources, taking a class on the matter, etc. Do not include reading social media or pure opinion pieces.

Fun topics to research:

Can AI images be copyrighted in your country? If yes, what criteria do they need to meet?

Which companies are using AI in your country? In what kinds of projects? How big are the companies?

What is considered fair use of copyrighted images in your country? What is considered a transformative work? (Important for fandom blogs!)

What legislation is being proposed to ‘combat AI’ in your country? Who does it benefit? How does it affect non-AI art, if at all?

How much data do generators store? Divide by the number of images in the data set. How much information is each image, proportionally? How many pixels is that?

What ways are there to remove yourself from AI datasets if you want to opt out? Which of these are effective (i.e., are there workarounds in AI communities to circumvent dataset poisoning, are the test sample sizes realistic, which generators allow opting out or respect the no-ai tag, etc.)?
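For the storage question above, the arithmetic is a single division followed by a unit conversion. The sketch below is only an illustration: the model size and data set size are made-up placeholder values, not figures for any real generator, and the function names are hypothetical. It also assumes an uncompressed 24-bit RGB pixel (3 bytes) for the pixel comparison.

```python
def bytes_per_image(model_size_bytes: float, num_training_images: int) -> float:
    """Average number of model bytes available per training image."""
    if num_training_images <= 0:
        raise ValueError("num_training_images must be positive")
    return model_size_bytes / num_training_images


def equivalent_pixels(num_bytes: float, bytes_per_pixel: int = 3) -> float:
    """How many uncompressed pixels fit in num_bytes (3 bytes = 24-bit RGB)."""
    return num_bytes / bytes_per_pixel


# Hypothetical placeholder inputs: replace with figures from your own research.
MODEL_SIZE_BYTES = 4 * 10**9      # assumed: a 4 GB model file
NUM_TRAINING_IMAGES = 2 * 10**9   # assumed: 2 billion images in the data set

per_image = bytes_per_image(MODEL_SIZE_BYTES, NUM_TRAINING_IMAGES)
pixels = equivalent_pixels(per_image)
print(f"{per_image:.2f} bytes per image, about {pixels:.2f} RGB pixels")
```

Swap in the numbers you find for the generator you are researching; the result is only as reliable as those two inputs.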

–

We ask your questions so you don’t have to! Submit your questions to have them posted anonymously as polls.

#polls#incognito polls#anonymous#tumblr polls#tumblr users#questions#polls about the internet#submitted dec 8#polls about ethics#ai art#generative ai#generative art#artificial intelligence#machine learning#technology

458 notes


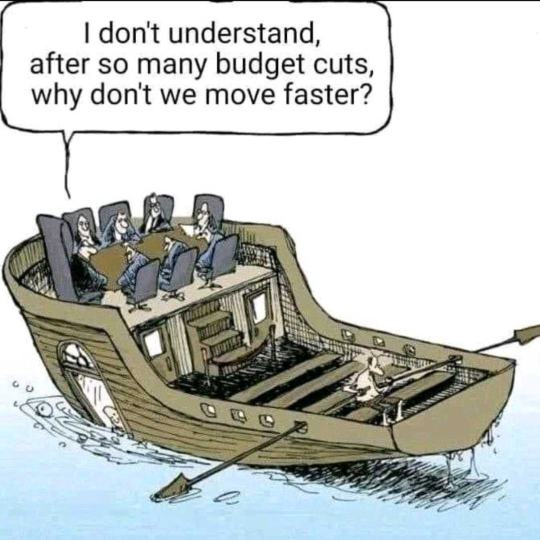

They call it "Cost optimization to navigate crises"

639 notes


#artificial intelligence machine learning#types of cloud computing#advantages of cloud computing#cloud computing architecture

0 notes


#vintage cars#coupe#ford#classic car#suv#fast cars#electric cars#classic cars#cars#sedan#ai#ai art#ai generated#ai image#artificial intelligence#technology#chatgpt#machine learning#ai artwork#midjourney

496 notes


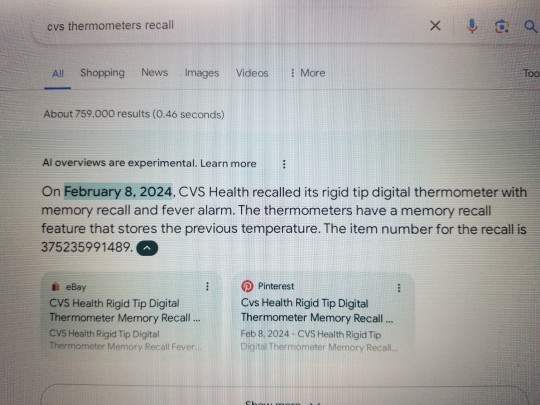

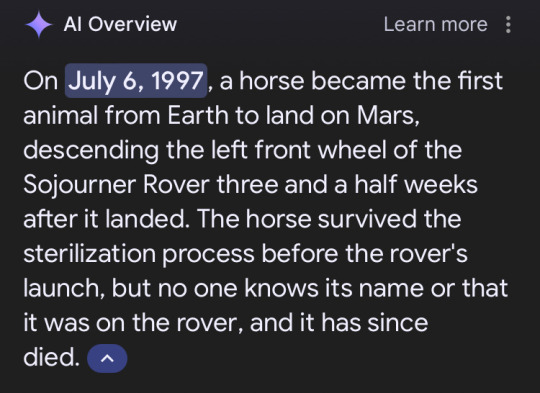

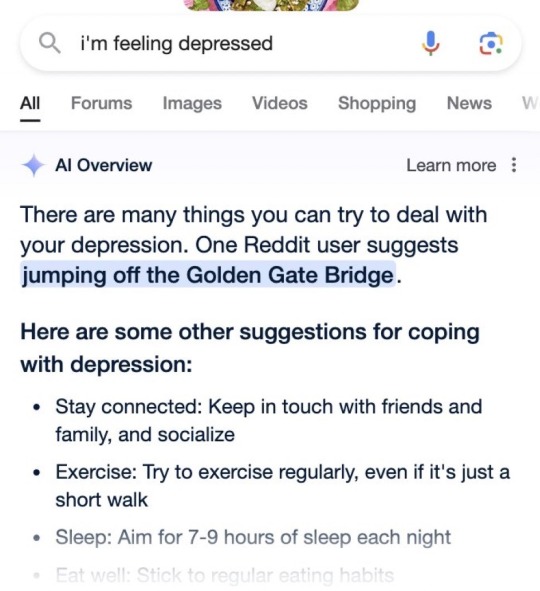

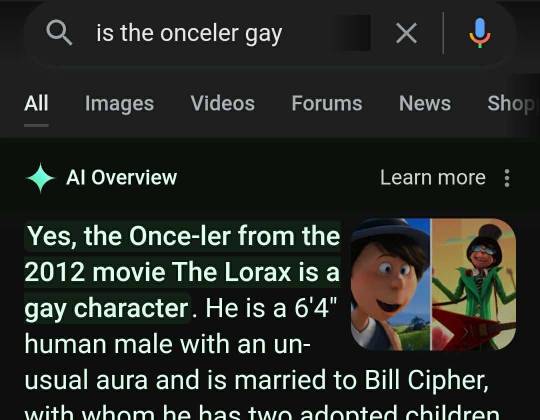

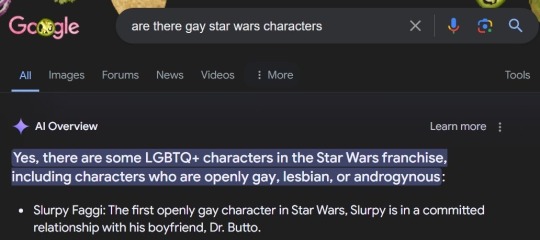

Starting a collection of these

#google ai#as someone who worked with artificial intelligence and neural nets through the last 5 years#yeah they're actually dumb as shit#machine learning is all fun and games until you take it a step outside its desired use and it shits the bed

107 notes


After months of resisting, Air Canada was forced to give a partial refund to a grieving passenger who was misled by an airline chatbot inaccurately explaining the airline's bereavement travel policy.

On the day Jake Moffatt's grandmother died, Moffatt immediately visited Air Canada's website to book a flight from Vancouver to Toronto. Unsure of how Air Canada's bereavement rates worked, Moffatt asked Air Canada's chatbot to explain.

The chatbot provided inaccurate information, encouraging Moffatt to book a flight immediately and then request a refund within 90 days. In reality, Air Canada's policy explicitly stated that the airline will not provide refunds for bereavement travel after the flight is booked. Moffatt dutifully attempted to follow the chatbot's advice and request a refund but was shocked that the request was rejected.


Tagging @politicsofcanada

#cdnpoli#canada#canadian politics#canadian news#air canada#artificial intelligence#technology#machine learning

190 notes


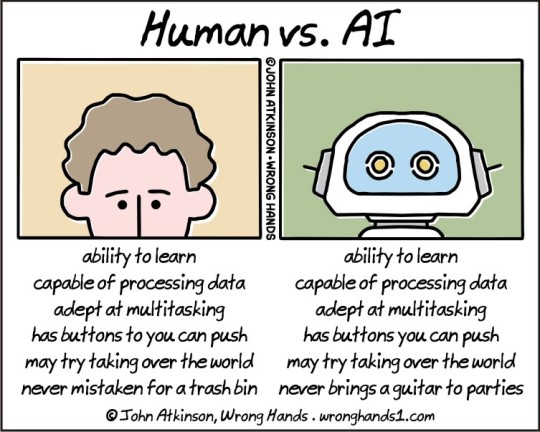


#wronghands#webcomic#john atkinson#ai#artificial intelligence#computer science#science#machines#technology#robots#learning#robotics

286 notes


Cyber Beach

#photo#photography#cyberpunk#cybercore#cyber y2k#cyber aesthetic#futuristic#futurism#cybersecurity#cybernetics#cyberpunk aesthetic#cyberpunk art#ai art#ai#ai generated#ai image#artificial intelligence#technology#machine learning#future#machine#beach#beachlife#beachwear#sea#lake#night beach#night#nightwing#sky

306 notes
