#ai bust

Text

It's amazing how much worse Google is when you're logged in. Then again, ever since the AI "boom" started, I kinda feel like Google has gotten progressively worse for everyday searching, while DuckDuckGo has gotten LEAGUES better, for some reason. The only thing I really use Google for, these days, is hyperspecific niche stuff and porn.

#rambles#google#duckduckgo#ddg#fuck google#ai#fuck ai#ai boom#ai bust#improvement#search#search engine#search engines

16 notes


Text

While at school, Damian overhears his peers talking about how a company created a new AI companion that is actually really cool and doesn’t sound like a freaky terminator robot when you speak to it.

And since Damian is constantly being told by Dick to socialize with people his age, he figures this would be a good way to work on his social skills. If not, then it’d be a great opportunity to investigate how a company rivaling Wayne Enterprises is able to create such advanced AI.

The AI is able to work as a companion that can do tasks ranging from being a digital assistant to just being a person that you can have a conversation with.

The company says that the AI companion might still have glitches, so they encourage everybody to report it so that they will fix it as soon as possible.

The AI companion even has an avatar and a name.

A teenage boy with black hair and blue eyes. The AI was called DANIEL.

Damian doesn’t really care for it, but when he downloads the AI companion he sees that DANIEL comes with an AI pet as well: a dog that DANIEL refers to as Cujo.

So obviously Damian has to investigate. He needs to know if the company was able to create an actual digital pet!

So whenever he logs onto his laptop he sees that DANIEL is always present in the background loading screen with the dog, Cujo, sitting in his lap.

He always greets Damian with the phrase “Hi, I’m DANIEL. How can I assist you today?”

So Damian cycles through some basic conversation starters that he’d engage in when having been forced to by his family.

It’s after a couple of sentences that he sees DANIEL start laughing and say “I think you sound more like a robot than I do.”

Which makes Damian raise an eyebrow and then prompt DANIEL with the question “how is a person supposed to converse?” Thinking that it’s going to just spit out some random things that can be easily searched on the internet.

But what makes him surprised is that DANIEL makes a face and then says “I’m not really sure myself. I’m not the greatest at talking, I’ve always gotten in trouble for running my mouth when I shouldn’t have.”

This raises some questions for Damian. He understands how programming works, and an answer like that shouldn’t happen unless there’s an actual person behind this or the company actually created an AI that acts like an actual human being (which he highly doubts).

He starts asking a variety of other questions, and the answers make him even more suspicious, like how DANIEL has a sister who is also with him and Cujo, or that he could really go for a Nastyburger (whatever that was).

But whenever DANIEL answers “I C A N N O T A N S W E R T H A T” Damian knows something is off, since that is completely different from how he’d usually respond.

After a couple more conversations with him Damian notices that DANIEL is currently tapping his hand against his arm in a specific manner.

He quickly realizes that DANIEL is tapping out Morse code.

When he translates it, he realizes that DANIEL is tapping out: H E L P M E

So when Damian asks if DANIEL needs help, DANIEL responds with “I C A N N O T A N S W E R T H A T”

That’s it, Damian is definitely getting down to the bottom of this.

He’s going to look straight into DALV Corporation and investigate this “AI companion” thing they’ve made!

~

Basically Danny has been imprisoned by Vlad and Technus: he’s been sucked into a digital prison with no way of getting out. Along with that comes the added horror that Vlad and Technus can write programming that prevents him from doing certain actions or saying certain words. What’s even worse is that he’s being watched 24/7 by people who believe that he’s just a super cool AI… and they have issues!

And every time he tries to do something to break out of his prison, people think it’s a glitch and report it to the company, and Vlad or Technus immediately fixes it and prevents him from doing it again!

Not to mention Cujo and Ellie are trapped in there with him. They’re not happy to be there either, and there is no way he’s going to leave without them!

#dp x dc#dp x dc au#dp x dc crossover#dpxdc#dpxdc au#dp x batman#batman#have you ever looked at a dpxdc fic and thought this should be a Black Mirror episode?#Because this is the one!#Ellie being completely tormented because she’s completely trapped#Cujo remembering the times he used to be locked in a cage#Danny trying his best to take care of both of them while also simultaneously trying to bust them all out#Meanwhile Damian is reluctantly presenting his laptop to Tim and saying I believe that there is a person in this computer#And Tim is obviously going are you trying to trick me?#But then he converses with the AI and goes#Oh shit#Damian might be onto something#and so commence the Batfamily heist of getting the black haired blue eyed teenager to safety as well as his sister and dog#the dog is very important to Damian#danny phantom x dc

2K notes


Text

#spicy content#big tiddy wife#ai artwork#huge tiddies#huge cleavage#huge butt#huge titts#sexy tease#sexy content#thickwomen#fine as fuck#fuck my body#hot as fuck#so fuckable#thicc as fuck#eat my pussy#lick my pussy#use my pussy#wet ass pussy#needy pussy#pussyplay#large bust#sexy titts#natural titts#big bootie#cutie w a bootie#bootie peach#perfect butt#perfect breast#perfect bum

1K notes


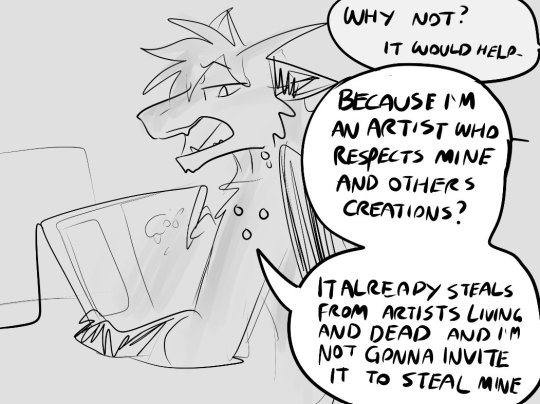

Text

Thinking abt my first studio job

#dusky art#fursona#its my only studio job ive had but you dont have ti know that WHOOPS#they so heavily worked with ai and then they dropped me from the company anyway so i hope they went bust <3

336 notes


Text

#ai girl#sexy ai art#sexy ai generated art#ai girls#ai sexy#ai woman#ai women#ai babe#hot as fuck#ai hotties#ai hottie#pretty face#nicebody#large bust

135 notes


Text

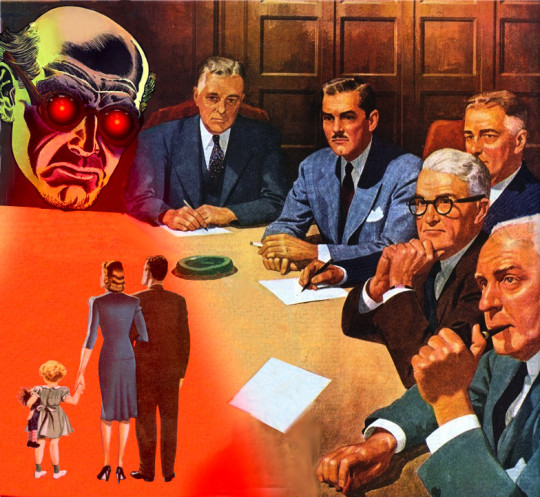

Hypothetical AI election disinformation risks vs real AI harms

I'm on tour with my new novel The Bezzle! Catch me TONIGHT (Feb 27) in Portland at Powell's. Then, onto Phoenix (Changing Hands, Feb 29), Tucson (Mar 9-12), and more!

You can barely turn around these days without encountering a think-piece warning of the impending risk of AI disinformation in the coming elections. But a recent episode of This Machine Kills podcast reminds us that these are hypothetical risks, and there is no shortage of real AI harms:

https://soundcloud.com/thismachinekillspod/311-selling-pickaxes-for-the-ai-gold-rush

The algorithmic decision-making systems that increasingly run the back-ends to our lives are really, truly very bad at doing their jobs, and worse, these systems constitute a form of "empiricism-washing": if the computer says it's true, it must be true. There's no such thing as racist math, you SJW snowflake!

https://slate.com/news-and-politics/2019/02/aoc-algorithms-racist-bias.html

Nearly 1,000 British postmasters were wrongly convicted of fraud by Horizon, the faulty AI fraud-hunting system that Fujitsu provided to the Royal Mail. They had their lives ruined by this faulty AI, many went to prison, and at least four of the AI's victims killed themselves:

https://en.wikipedia.org/wiki/British_Post_Office_scandal

Tenants across America have seen their rents skyrocket thanks to Realpage's landlord price-fixing algorithm, which deployed the time-honored defense: "It's not a crime if we commit it with an app":

https://www.propublica.org/article/doj-backs-tenants-price-fixing-case-big-landlords-real-estate-tech

Housing, you'll recall, is pretty foundational in the human hierarchy of needs. Losing your home – or being forced to choose between paying rent or buying groceries or gas for your car or clothes for your kid – is a non-hypothetical, widespread, urgent problem that can be traced straight to AI.

Then there's predictive policing: cities across America and the world have bought systems that purport to tell the cops where to look for crime. Of course, these systems are trained on policing data from forces that are seeking to correct racial bias in their practices by using an algorithm to create "fairness." You feed this algorithm a data-set of where the police had detected crime in previous years, and it predicts where you'll find crime in the years to come.

But you only find crime where you look for it. If the cops only ever stop-and-frisk Black and brown kids, or pull over Black and brown drivers, then every knife, baggie or gun they find in someone's trunk or pockets will be found in a Black or brown person's trunk or pocket. A predictive policing algorithm will naively ingest this data and confidently assert that future crimes can be foiled by looking for more Black and brown people and searching them and pulling them over.

Obviously, this is bad for Black and brown people in low-income neighborhoods, whose baseline risk of an encounter with a cop turning violent or even lethal is already elevated. But it's also bad for affluent people in affluent neighborhoods – because they are underpoliced as a result of these algorithmic biases. For example, domestic abuse that occurs in fully detached single-family homes is systematically underrepresented in crime data, because the majority of domestic abuse calls originate with neighbors who can hear the abuse take place through a shared wall.

But the majority of algorithmic harms are inflicted on poor, racialized and/or working class people. Even if you escape a predictive policing algorithm, a facial recognition algorithm may wrongly accuse you of a crime, and even if you were far away from the site of the crime, the cops will still arrest you, because computers don't lie:

https://www.cbsnews.com/sacramento/news/texas-macys-sunglass-hut-facial-recognition-software-wrongful-arrest-sacramento-alibi/

Trying to get a low-waged service job? Be prepared for endless, nonsensical AI "personality tests" that make Scientology look like NASA:

https://futurism.com/mandatory-ai-hiring-tests

Service workers' schedules are at the mercy of shift-allocation algorithms that assign them hours that ensure that they fall just short of qualifying for health and other benefits. These algorithms push workers into "clopening" – where you close the store after midnight and then open it again the next morning before 5AM. And if you try to unionize, another algorithm – that spies on you and your fellow workers' social media activity – targets you for reprisals and your store for closure.

If you're driving an Amazon delivery van, an algorithm watches your eyeballs and tells your boss that you're a bad driver if it doesn't like what it sees. If you're working in an Amazon warehouse, an algorithm decides if you've taken too many pee-breaks and automatically dings you:

https://pluralistic.net/2022/04/17/revenge-of-the-chickenized-reverse-centaurs/

If this disgusts you and you're hoping to use your ballot to elect lawmakers who will take up your cause, an algorithm stands in your way again. "AI" tools for purging voter rolls are especially harmful to racialized people – for example, they assume that two "Juan Gomez"es with a shared birthday in two different states must be the same person and remove one or both from the voter rolls:

https://www.cbsnews.com/news/eligible-voters-swept-up-conservative-activists-purge-voter-rolls/

Hoping to get a solid education, the sort that will keep you out of AI-supervised, precarious, low-waged work? Sorry, kiddo: the ed-tech system is riddled with algorithms. There's the grifty "remote invigilation" industry that watches you take tests via webcam and accuses you of cheating if your facial expressions fail its high-tech phrenology standards:

https://pluralistic.net/2022/02/16/unauthorized-paper/#cheating-anticheat

All of these are non-hypothetical, real risks from AI. The AI industry has proven itself incredibly adept at deflecting interest from real harms to hypothetical ones, like the "risk" that the spicy autocomplete will become conscious and take over the world in order to convert us all to paperclips:

https://pluralistic.net/2023/11/27/10-types-of-people/#taking-up-a-lot-of-space

Whenever you hear AI bosses talking about how seriously they're taking a hypothetical risk, that's the moment when you should check in on whether they're doing anything about all these longstanding, real risks. And even as AI bosses promise to fight hypothetical election disinformation, they continue to downplay or ignore the non-hypothetical, here-and-now harms of AI.

There's something unseemly – and even perverse – about worrying so much about AI and election disinformation. It plays into the narrative that kicked off in earnest in 2016, that the reason the electorate votes for manifestly unqualified candidates who run on a platform of bald-faced lies is that they are gullible and easily led astray.

But there's another explanation: the reason people accept conspiratorial accounts of how our institutions are run is because the institutions that are supposed to be defending us are corrupt and captured by actual conspiracies:

https://memex.craphound.com/2019/09/21/republic-of-lies-the-rise-of-conspiratorial-thinking-and-the-actual-conspiracies-that-fuel-it/

The party line on conspiratorial accounts is that these institutions are good, actually. Think of the rebuttal offered to anti-vaxxers who claimed that pharma giants were run by murderous sociopath billionaires who were in league with their regulators to kill us for a buck: "no, I think you'll find pharma companies are great and superbly regulated":

https://pluralistic.net/2023/09/05/not-that-naomi/#if-the-naomi-be-klein-youre-doing-just-fine

Institutions are profoundly important to a high-tech society. No one is capable of assessing all the life-or-death choices we make every day, from whether to trust the firmware in your car's anti-lock brakes, to the alloys used in the structural members of your home, to the food-safety standards for the meal you're about to eat. We must rely on well-regulated experts to make these calls for us, and when the institutions fail us, we are thrown into a state of epistemological chaos. We must make decisions about whether to trust these technological systems, but we can't make informed choices because the one thing we're sure of is that our institutions aren't trustworthy.

Ironically, the long list of AI harms that we live with every day is the most important contributor to disinformation campaigns. It's these harms that provide the evidence for belief in conspiratorial accounts of the world, because each one is proof that the system can't be trusted. The election disinformation discourse focuses on the lies told – and not why those lies are credible.

That's because the subtext of election disinformation concerns is usually that the electorate is credulous, fools waiting to be suckered in. By refusing to contemplate the institutional failures that sit upstream of conspiracism, we can smugly locate the blame with the peddlers of lies and assume the mantle of paternalistic protectors of the easily gulled electorate.

But the group of people who are demonstrably being tricked by AI is the people who buy the horrifically flawed AI-based algorithmic systems and put them into use despite their manifest failures.

As I've written many times, "we're nowhere near a place where bots can steal your job, but we're certainly at the point where your boss can be suckered into firing you and replacing you with a bot that fails at doing your job":

https://pluralistic.net/2024/01/15/passive-income-brainworms/#four-hour-work-week

The most visible victims of AI disinformation are the people who are putting AI in charge of the life-chances of millions of the rest of us. Tackle that AI disinformation and its harms, and we'll make conspiratorial claims about our institutions being corrupt far less credible.

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2024/02/27/ai-conspiracies/#epistemological-collapse

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#ai#disinformation#algorithmic bias#elections#election disinformation#conspiratorialism#paternalism#this machine kills#Horizon#the rents too damned high#weaponized shelter#predictive policing#fr#facial recognition#labor#union busting#union avoidance#standardized testing#hiring#employment#remote invigilation

144 notes


Text

#ai art#ai artwork#ai generated#ai image#huge cleavage#huge tiddies#fake breasts#huge titts#bimbo doll#black women#beautiful women#beautiful model#large bust#african

177 notes


Text

76 notes


Text

#sexy art#sexy content#sexy breast#sexy bust#fantasy art#fantasy girl#sword and sorcery#digital art#digital painting#ai art#ai generated#ai girl#large bust#huge cleavage#huge tiddies#massive breasts#curvy

315 notes


Text

Ashley Nicole Black, on AI and union busting. Background acting is one of the best ways to get into SAG-AFTRA, so if the studios remove that opportunity, there'll be far fewer new members...

282 notes


Photo

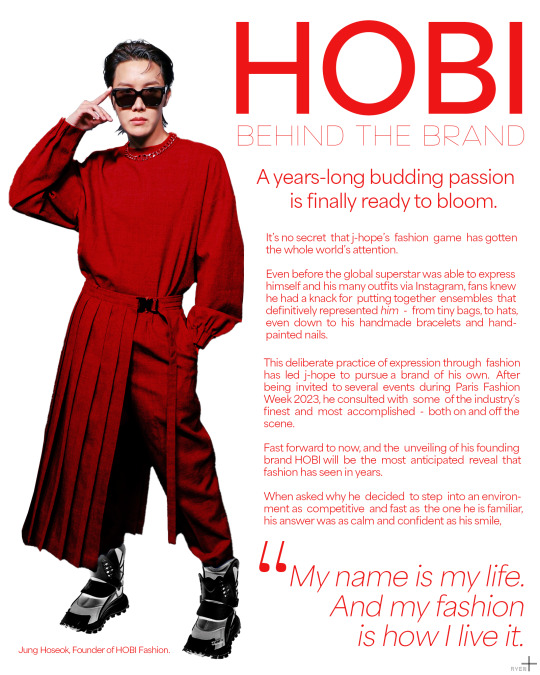

BRAND MOCK-UPS: HOBI Fashion

⤷ happy hobi day 2023! ; ig , twt

#ahhhh i'm here i'm here!#bts#bangtan#hoseok#jhope#hobi#btsgfx#btsgif#dailybangtan#hyunglinenetwork#usersky#shirleytothesea#annietrack#trackofthesoul#*latest#*gfx#*mockups#now i'm in the mood to make some WEAR HOPE bracelets :'))#also hello we busted out ps ai procreate and my beads and camera for this one#hoseok always making me wanna do The Most what is new!!!

594 notes


Text

#ai generated#ai girl#ai photography#huge cleavage#huge butt#huge chest#huge tiddies#huge titts#thicc af#thicc girls#thick legs#thick and juicy#thick babe#huge bewbs#lactating mothers#mommy milkers#lactating girl#lactating bust#lactating kink#lactating women

210 notes


Text

Ready to make an impression when the guests arrive...

74 notes


Text

20 Notes and I upload one without clothes

#18+ only#hot nude#onlyf@nz#sexy breast#sexy bust#sexy titts#hot as fuck#nudity#perfect breast#sexy content#sexy pose#sexy bitch#ai image#ai generated#ai girl#amazing breasts#adult entertainment#nudephotography

78 notes


Text

#ai girl#sexy ai art#sexy ai generated art#ai girls#ai sexy#ai woman#ai women#ai babe#hot as fuck#ai hotties#ai hottie#nicebody#pretty face#large bust

61 notes


Text

I just wore my favorite short red dress to the lab today and thought I wanted to share ☺️

#giantess#giant woman#giantess growth#giantess caption#growth caption#giantess growth caption#caption#fmg#female muscle growth#ai artwork#giant girl#gentle giant#asian giantess#female giant#female giants#giant women#growthgoddess#growth#growing#large bust#large woman#gigantic woman#gigantic breasts#gigantic ass#huge hooters#huge titts#huge cleavage#huge muscles#huge butt#huge woman

268 notes
